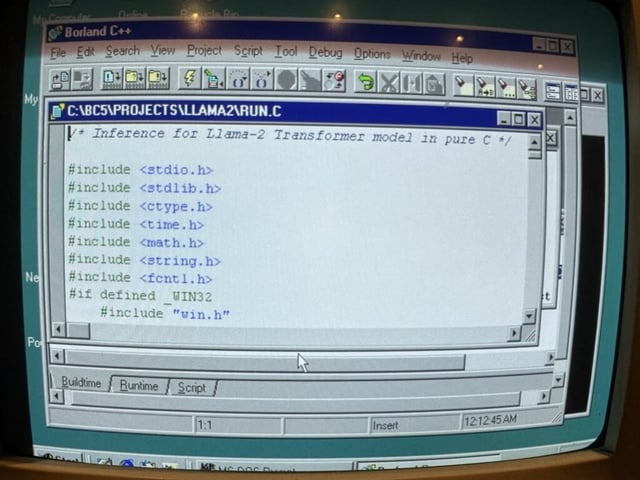

A team at EXO Labs, along with allied open-source enthusiasts, accomplished something that sounds impossible at first: they ran a Llama 2-based AI model on a Windows 98 system powered by a mere Intel Pentium II clocked at 350 MHz, with just 128 MB of RAM.

This demonstration shatters our assumptions about AI's hardware demands by showing that even a decades-old, low-spec machine can execute a modern language-model architecture, provided the model is small enough. It underscores the untapped potential of algorithmic efficiency over brute-force hardware scaling.

Model Performance Overview

| Model size | Speed | Notes |
| --- | --- | --- |
| 260K parameters | ~35-39 tok/s | Surprisingly robust on old hardware |
| 15M parameters | ~1 tok/s | Noticeable slowdown |
| 1B parameters | ~0.0093 tok/s | Extremely slow/unusable |
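
To put the throughput figures above in perspective, the short Python sketch below converts each rate into the wall-clock time needed for one reply. The 100-token reply length and the 37 tok/s midpoint for the 260K model are illustrative assumptions, not numbers reported by EXO Labs.

```python
# Throughput figures (tokens per second) from the performance overview above.
# The 260K entry uses the midpoint of the reported ~35-39 range (an assumption).
THROUGHPUT_TOK_PER_S = {
    "260K": 37.0,
    "15M": 1.0,
    "1B": 0.0093,
}


def seconds_to_generate(num_tokens: int, tok_per_s: float) -> float:
    """Return the seconds needed to emit num_tokens at a steady rate."""
    if tok_per_s <= 0:
        raise ValueError("tok_per_s must be positive")
    return num_tokens / tok_per_s


if __name__ == "__main__":
    reply_tokens = 100  # hypothetical reply length, chosen for illustration
    for model, rate in THROUGHPUT_TOK_PER_S.items():
        secs = seconds_to_generate(reply_tokens, rate)
        print(f"{model:>5}: {secs:10.1f} s ({secs / 3600:.2f} h)")
```

Under these assumptions, the 260K model finishes in a few seconds, the 15M model takes well over a minute, and the 1B model needs roughly three hours, which is why the last row is labeled unusable.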

Enter BitNet: Leaner, Meaner, More Efficient

To push boundaries further, EXO Labs advocates BitNet, a transformer variant using ternary weights (values of -1, 0, or 1). This yields dramatic efficiency:

- A 7B‑parameter BitNet model can be compressed to ~1.38 GB, making it plausible to run on older CPUs.

- The design is CPU-first, so no GPU is required, and it is claimed to use ~50% less energy than full-precision models.

- EXO claims that even 100B-parameter models could run at human reading speed (≈ 5-7 tok/s) on a single CPU.
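
The ~1.38 GB figure can be sanity-checked with a little arithmetic. A weight that takes one of three values carries log2(3) ≈ 1.585 bits of information, commonly rounded to 1.58 bits. The sketch below assumes that rounding and decimal gigabytes (1 GB = 10^9 bytes); the 16-bit baseline and the 2-bit packing row are added here for comparison and are not figures from EXO Labs.

```python
import math

# Information content of one ternary weight in {-1, 0, 1}: about 1.585 bits.
TERNARY_BITS = math.log2(3)


def model_size_gb(num_params: float, bits_per_weight: float) -> float:
    """Weight storage in decimal gigabytes (1 GB = 1e9 bytes).

    Counts weights only; activations, KV cache, and file-format
    overhead are ignored in this rough estimate.
    """
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    params = 7e9  # the 7B-parameter model mentioned above
    for label, bits in [
        ("16-bit baseline (assumed)", 16),
        ("2-bit packing (assumed)", 2),
        ("ternary, exact log2(3)", TERNARY_BITS),
        ("ternary, rounded 1.58", 1.58),
    ]:
        print(f"{label:<28} {model_size_gb(params, bits):6.2f} GB")
```

With 1.58 bits per weight, 7 billion parameters come to about 1.38 GB, consistent with the figure quoted above and roughly a tenth of the 14 GB that 16-bit weights would need.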

Implications for the Future

Accessibility: AI becomes viable on low‑cost hardware. This democratizes access, especially in educational, non‑profit, or resource‑constrained settings.

Sustainability: Extending the lifecycle of old machines helps reduce e‑waste and energy consumption. It’s a win for both budgets and the planet.

Software-First: Rather than investing solely in more powerful chips, the field can gain increasing leverage from algorithmic efficiency, which can make AI lighter, greener, and more inclusive.

What EXO Labs achieved demonstrates that AI doesn't have to reside in data centers equipped with GPUs. With clever engineering (like building llama2.c with Borland C++), resource-aware model design (BitNet), and attention to hardware limitations, AI becomes truly portable, even to a machine nearly three decades old.

Check out more from EXO Labs: https://exolabs.net